If you’ve been in the infosec industry for at least 10 years, you might remember a hubbub in 2010 over someone dubbing security researchers “Narcissistic Vulnerability Pimps” or NVPs. Confession time…that was me. At that time, part of my job was keeping customers informed about developments that altered their cyber risk posture, and I was, quite frankly, more than a little tired of the “drop a vuln at a conference for the lulz” trend without regard for how it impacted community risk. Since then, I’ve grown up, mellowed out, gained experience, done a lot of research, and come to a place where I’m willing to allow that dropping vulns and exploits regardless of whether patches are available *might possibly* – under certain circumstances – be beneficial for reducing risk.

Why the (possible) change? It’s been a journey, but I was recently confronted by evidence from a report we released with Kenna Security that forced me to reevaluate my presuppositions.

In “Prediction to Prioritization Vol. 6,” we had the opportunity to do something I’ve wanted to do since the NVP kerfuffle back in 2010 – measure the effect of vulnerability disclosures and exploit development on attacker-defender dynamics (aka risk). This is difficult to do in practice because you need a bunch of information on key milestones in the lifecycle of vulnerabilities, lots of data on vulnerability remediation efforts within organizations, and also large-scale visibility into detections of exploit activity in the wild. Until P2Pv6, we’d never had all that data together in a comparable format. I thought having it would answer all my questions. Unfortunately, it just prompted more questions…such as the one in the title of this post.

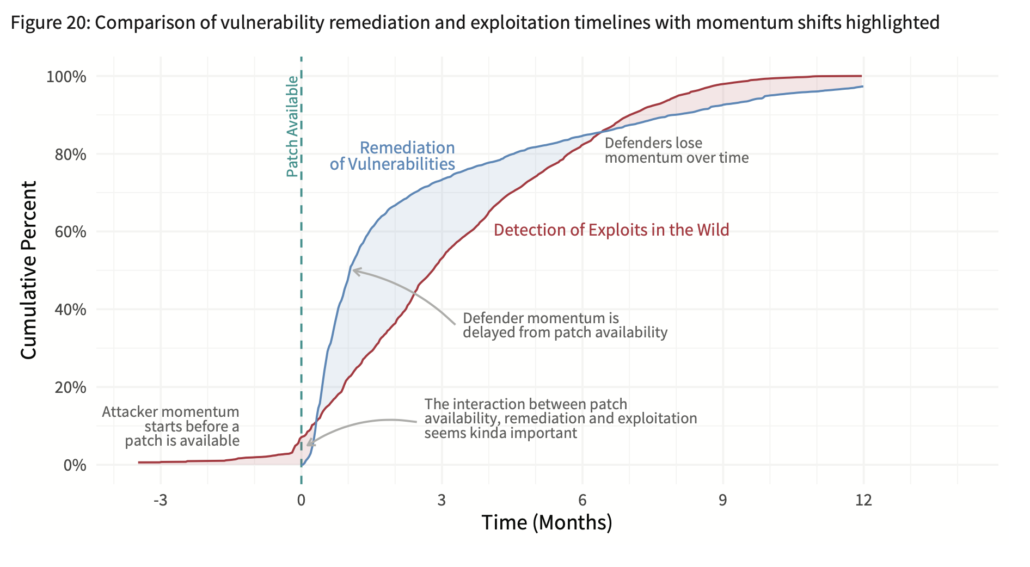

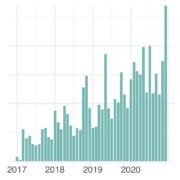

Enough background; let’s look at the data. The blue line in the chart below shows the average time-to-remediation for vulns across several hundred organizations. The red shows the spread of exploitation attempts from the first organization that detected related activity to the last. An earlier post on this chart goes into more detail, but the key takeaway to my mind is that defenders control the momentum for a respectable amount of time after a patch becomes available.

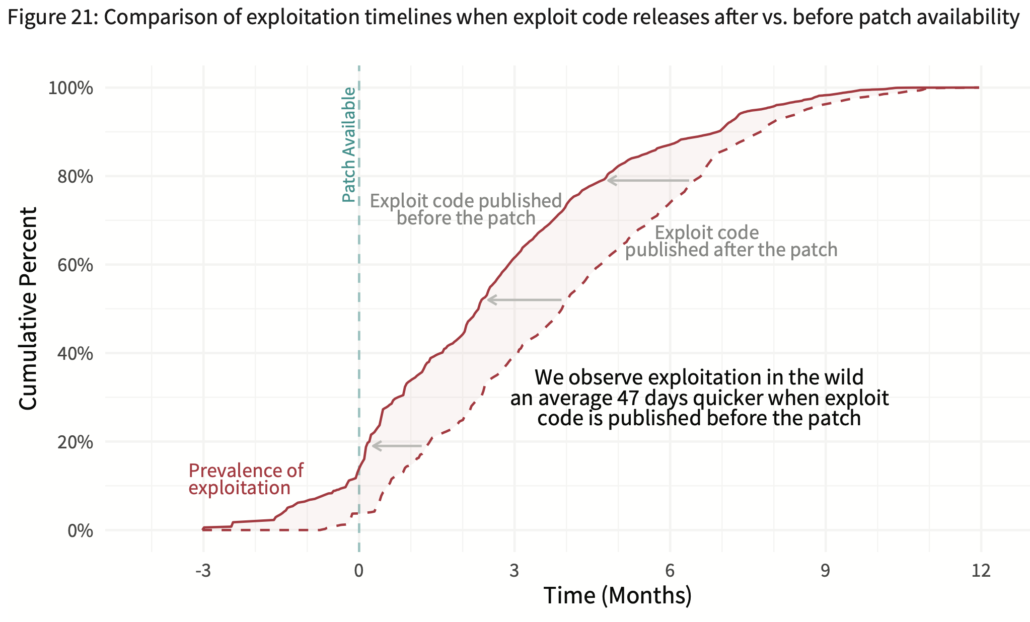

Those red and blue lines are an overall average, and the attacker-defender divide is not set in stone as the chart above might suggest. For instance, let’s look at how much one minor change – exploits published after vs. before a patch – impacts the exploitation and remediation timelines. The difference in the chart below for these two scenarios is unmistakable. The exploitation timeline shifts left by an average of 47 days when an exploit is released before the patch becomes available.

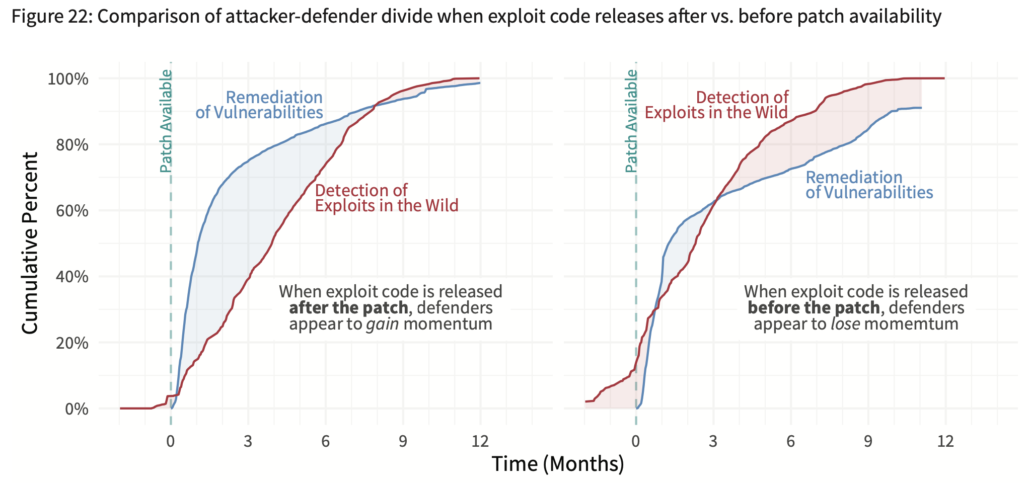

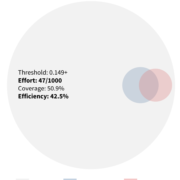

The charts below show the result of this shift on attacker-defender dynamics. The left chart, where patches predate exploits, shows an ample period of time during which defenders have the advantage (about 8 of 15 months). That period of advantage is drastically reduced in the right chart when exploits drop before a patch is available. The net result is that attackers now control the momentum for a much longer period of time (12 of 15 months).
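To make the “months of advantage” comparison concrete, here is a minimal Python sketch. It rests on assumptions that are mine, not the report’s: that “advantage” in a given month means the cumulative share of vulns remediated sits above the cumulative share of organizations seeing exploitation, and that an earlier exploit release can be modeled as sliding the exploitation curve left by whole months. The two curves are toy numbers invented for illustration (they are not data from P2Pv6), and the function names are hypothetical.

```python
from typing import List, Sequence


def defender_advantage_months(remediated: Sequence[float],
                              exploited: Sequence[float]) -> int:
    """Count months where cumulative remediation exceeds cumulative exploitation.

    ASSUMPTION: this is one simple way to define "defender advantage";
    the report may define momentum differently.
    """
    if len(remediated) != len(exploited):
        raise ValueError("curves must cover the same number of months")
    return sum(1 for r, e in zip(remediated, exploited) if r > e)


def shift_earlier(curve: Sequence[float], months: int) -> List[float]:
    """Slide a non-empty cumulative curve left by `months`, holding its last value."""
    if months <= 0:
        return list(curve)
    shifted = list(curve[months:])
    # Pad the tail so the shifted curve still spans the same window.
    shifted.extend([curve[-1]] * (len(curve) - len(shifted)))
    return shifted


# TOY DATA (invented, 15 monthly points each); not taken from the report.
remediated = [0.10, 0.25, 0.38, 0.48, 0.56, 0.62, 0.67, 0.71,
              0.74, 0.77, 0.79, 0.81, 0.83, 0.84, 0.85]
exploited = [0.02, 0.08, 0.15, 0.24, 0.34, 0.45, 0.56, 0.66,
             0.75, 0.82, 0.87, 0.91, 0.94, 0.96, 0.97]

# ASSUMPTION: a 47-day shift is rounded up to 2 whole months for this toy model.
exploited_early = shift_earlier(exploited, 2)

patch_first = defender_advantage_months(remediated, exploited)
exploit_first = defender_advantage_months(remediated, exploited_early)
print(f"Defender-advantage months, patch first:   {patch_first} of {len(remediated)}")
print(f"Defender-advantage months, exploit first: {exploit_first} of {len(remediated)}")
```

Even in this crude model, a modest leftward shift of the exploitation curve eats most of the window in which remediation is ahead. Note that the sketch cannot tell the two interpretations below apart: it treats the curve as given and says nothing about whether the shift reflects earlier exploitation or merely earlier detection.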

At this point, you might be thinking this is an open-and-shut case that those who disregard coordinated disclosure and drop exploits before patches are available deserve the moniker of NVP rather than MVP. But hold the phone…

One possible interpretation of this figure is that early exploit release increases community risk by giving attackers a big head start that puts defenders continually behind the exposure curve. That’s the position I’ve long held.

But another possible interpretation is that releasing exploits early enables earlier detection of exploitation in the wild. Exploit code is often used for creating IPS, AV, and other signatures to detect exploitation of vulnerabilities. Perhaps the 47-day shift in exploitation timeline in the chart above is really just moving up the timetable of defenders being able to detect that activity. In this scenario, exploit developers could be considered the MVPs of the security community because they help us see the reality of the situation – exploitation is already happening. We just don’t know it yet and therefore can’t detect or respond to that exposure.

That leaves us with two plausible hypotheses about the ramifications of releasing exploit code before patches are available:

- It helps defenders by contributing to earlier detection.

- It helps attackers by contributing to earlier exploitation.

We discuss these hypotheses in depth at the end of the report and detail the data needed to properly test them. Perhaps you have access to some of that data and would like to contribute to the effort. I’ve stated my historic biases, but I’m willing to modify them accordingly based on which hypothesis is supported most strongly by the evidence. Basic science will tell us whether dropping exploits before patches is behavior befitting the NVP or MVP badge.

For now, the debate goes on, but at least we have a little more data with which to drive that debate. Where do you stand? Is releasing exploits before patches the work of the NVPs or the MVPs of the security community?

I’d like to clarify that this report/post isn’t about the disclosure of vulnerabilities. My interpretation of results from this report is that, overall, vulnerability disclosure is much improved and working as it should to coordinate the timing of vulnerability information and patch availability. The figures and discussion above focus specifically on exploit disclosure. That’s different from the old full vs. responsible disclosure debate that was mainly about how to get vendors to take action to fix their code and release a patch in a timely manner. What we’re discussing here is more about what my friend, Ed Bellis, terms “responsible exposure.” In other words, how and when should exploits be released to minimize the risk to defenders? Moving (or broadening) the focus from vendors to defenders would contribute to a more productive conversation.
